Your Smartphone Can Tell If Your Partner Is Cheating (And Not By Reading Their Texts)

This post originally appeared on The Good Men Project.

Think of a mood ring (if you’re old enough to remember them) powered by a state-of-the-art silicon chip. That’s the concept of artificial emotional intelligence (AEI), which sits at the intersection of psychology and technology. Mood rings were just crude liquid crystal thermometers that changed the color of stones, and there’s no proven link between body temperature and mood. But the science of interpreting facial expressions, of linking a blink or a curl of the lip to a feeling, such as anger, or an action, such as lying, is strongly supported by psychologist Paul Ekman’s research on microexpressions, which formed the basis of Fox’s recent crime drama Lie to Me, starring Tim Roth. Dr. Ekman learned and in turn taught humans how to read faces, but AEI enables technology to take over that job. AEI goes way beyond Siri, Apple’s robotic, monotone companion, who registers no emotions, hers or yours. AEI is Siri on steroids, as in, your phone reads your mood with its front-facing camera and uses it to help you. And it could just be the next big performance booster: the equivalent of emotional Viagra.

Perhaps the greatest of all human needs—and the hardest to satisfy—is our desire to be understood, to be with someone who gets us so we’re not constantly explaining. Ideally we want that someone to be human. But personal technology already does so much for us, and our phones are fast becoming like third hands and extensions of our brains. Would a device that understands us completely dampen our desire for sympathetic companionship, or would its unflinching honesty teach us to be better partners in our personal and professional relationships?

Intrigued by the theory of AEI? Here are some ideas on how it might work in practice:

Let’s say you’re typing a romantic text to your partner, but your phone sees visual cues that you’re feeling hostile. Perhaps it offers a telltale beep, or flashes a cognitive dissonance alert, or inquires in a concerned voice, “Thomas, are you feeling anger towards Kim right now?” Would you find the feedback freaky or fabulous? Intrusive or illuminating? Perhaps you would love your phone for improving your life (and your chances for romance). Maybe you would feel like you were using a crutch to fake it, or maybe you wouldn’t care. People hire editors all the time to fix their writing, use Photoshop to enhance images, and rely on autocorrect to eliminate typos (when it’s not introducing new errors). We also carefully craft our online personas for what we believe other people want to see. But a device that reads our moods and, if we allow it, automatically adjusts and recalibrates for them? Personally, I might be willing to try it, but I think I would have a hard time trusting it, and I’d need the option to review and override. The thing is, though, as I learned the hard way through two marriages, we’re often unconscious of our true states of mind, making us our own worst enemies when it comes to communication and behavioral choices. So are we better off trusting our read on ourselves or the phone’s “objective” take on our faces? One concern I have is that we might become dependent on our devices to correct our misfires and mask our shortcomings. I worry that this might leave us less willing to relate in person instead of helping us be more aware when speaking face to face.

Another possible application of AEI is using it to practice. Technology companies, such as startup t3 interactive, are leveraging the Wii’s ability to read gestures to create new learning applications that provide feedback on body language and other presentation skills. The product stands to benefit anyone who has to make a pitch in front of an audience. But take the idea a few sci-fi steps further. Imagine a “sincerity meter” on your smartphone that lets you know whether you’re coming across as genuine. It might help you become a better communicator, with the side effect of enabling psychopaths to become more convincing. My worry here is that our phones might become portable polygraphs, available to everyone at any time. Say you come home late from a business dinner, your fourth in as many weeks, and your partner asks if you’re having an affair. You deny it, and your partner says, “Talk into the phone.” Would the phone make us more accountable and less likely to cheat, or would you dump a partner who demanded such an Orwellian honesty test?

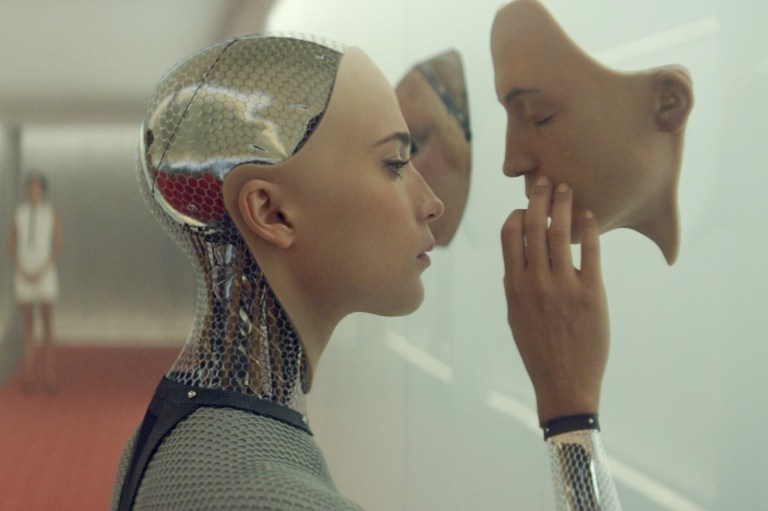

Questions of how far technology should go, and whether society is prepared to accept its advances, have been around for a long time. Think about cloning and other developments in genetic engineering that might enable us to “select” super-healthy or super-intelligent children, as teased in Aldous Huxley’s Brave New World. Could a phone with AEI detect a terrorist or prevent a suicide by reading the user’s mental state, or catch people engaging in unethical behavior? And would the government use this development to monitor its own citizens? The pursuit of transparency cuts both ways. The ubiquitous presence of mood readers could easily force us to be more forthright, but it could also motivate us to hide behind masks.